Linear Classification¶

In this section, we will cover the fundamentals of linear classification through a simple ML algorithm, the Perceptron. In addition, we will extend the concepts behind the Perceptron algorithm by considering aspects of regularization to build a margin linear classifier.

Hyperplanes¶

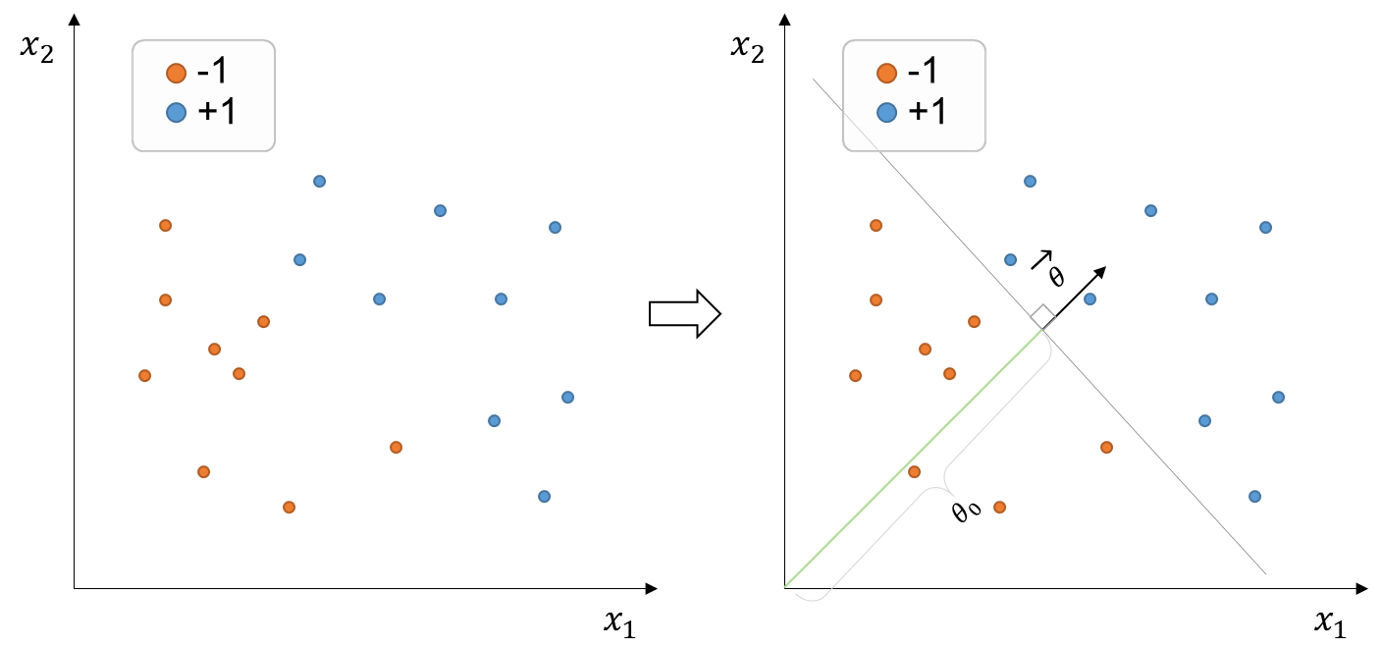

Suppose we wish to classify positive and negative objects from the training set of points below (figure on the left):

Figure 1:Training set of points with binary labels (+1, -1) and two-dimensional features. The decision boundary (grey line) is defined by the parameter vector , which is normal to the decision boundary, and offset parameter that linearly separates the data.

The dataset above is considered linearly separable because it exists at least one linear decision boundary capable of splitting the entire dataset correctly. For instance, we could pass a decision boundary like the grey line above (figure on the right)

In this case, since the features , that is, the feature set belongs to the two-dimensional space, the decision boundary constitutes a line. If we were dealing with a set of features in the three-dimensional space , the decision boundary would be a plane. Analogously, if our feature set were in a higher-dimensional space, the decision boundary would constitute a hyperplane.

A hyperplane with dimensions is conventionally denoted by the vector normal to the plane, , and offset (scalar) parameter . In the example above, we would define the hyperplane (or decision boundary) as:

Our classifier is thus equal to where and . Recall the sign function, also known as the signum function, is a mathematical function that returns the sign or direction of a real number. That is, if the input number is positive, negative, or 0, the sign function returns +1, -1, or 0, respectively.

Perceptron algorithm¶

In the perceptron algorithm, we typically initialize as zero (zero vector) and loop through the pair of training examples. At every iteration, we will check if the classifier makes a mistake classifying that training example (i-th example), and if so, then we update the parameters of .

Assume that for simplicity (the decision boundary must pass through the origin). Our perceptron classifier will make a mistake if . We will then update our to no longer misclassify that training example. The way to do this is by adding to the previous . Thus, the update would look like:

We have in hands a set of different training examples which have the potential to nudge/update our classifier in many directions. Thus, it is possible and even expected that the last training examples cause updates that will overwrite earlier, initial updates. This will result that earlier examples will no longer be correctly classified. For this reason, we need to loop through the whole training set multiple times to ensure that all examples are correctly classified. Such iterations can be performed both in order or randomly selected from the training examples.

We can code the algorithm as following:

import numpy as np

# Algorithm always starting to loop from x1

def perceptron(X, y, theta, t_times):

n_mistakes = 0

# Initialize list to show the progress (updates) of theta

progress_theta = []

# Initialize an array with same size as the total number of examples to count how many mistake are made at each training example

explicit_mistakes = np.zeros(shape=y.shape[0])

# Loop through the training set T times

for t in t_times:

# Loop through the training examples in order

for index, x in enumerate(X):

# Check if the algorithm makes a mistake in the i-th (or index-th) example

if y[index] * np.dot(theta, x) <= 0:

# Update theta to no longer misclassify the i-th example

theta = theta + y[index] * x

# Save the update theta

progress_theta.append(theta)

# Update total number of mistakes

n_mistakes += 1

# Update total number of mistakes at the i-th training example

explicit_mistakes[index] += 1

print('The perceptron did {} mistakes until convergence'.format(n_mistakes))

return progress_theta, n_mistakes, explicit_mistakes

if __name__ == '__main__':

X = np.array([[-1, -1], [1, 0], [-1, 1.5]])

# X = np.array([[-1, -1], [1, 0], [-1, 10]])

y = np.array([1, -1, 1])

t_times = range(0, 100)

theta = np.array([-1, -1])

a, b, c = perceptron(X, y, theta, t_times)Margin boundaries and hinge loss¶

As you may have noticed, the perceptron algorithm features no regularization term. The goal was simply to find any decision boundary that can split the data correctly. Here, we will introduce the concept of hinge loss and margin boundaries to transform the problem of learning a decision boundary into an optimization problem considering regularization.

Motivation behind margin boundaries¶

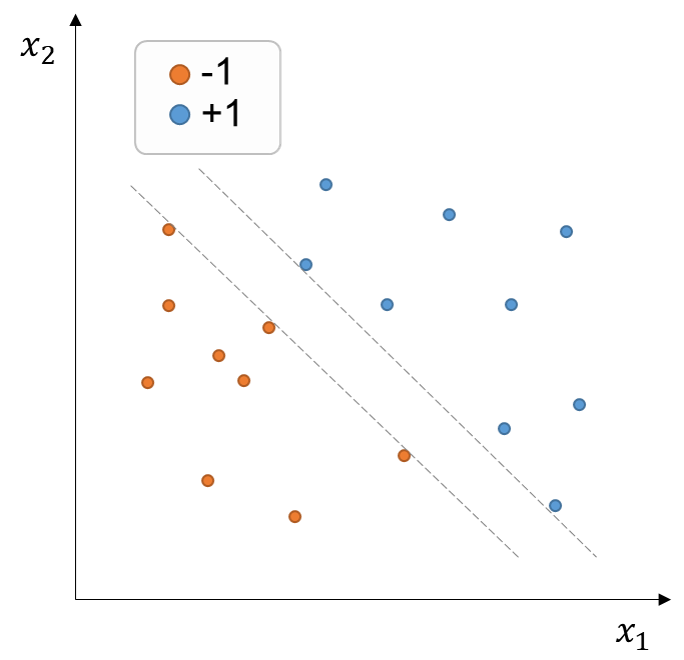

Let’s take a look at our previously presented training dataset (figure below). Any decision boundary within the dashed grey lines correctly splits the training examples. However, intuitively, we would like to favor a decision boundary that can maximize the distances between the decision boundary and the training points. The reason to do so is because it’s likely that the points we wish to classify in the future have statistical noise, such that a decision boundary located too close to the training examples is more likely to misclassify slightly changed (noisier) versions of the training examples. In contrast, a classifier that holds a relatively higher margin between the decision boundary and the examples will be probably more successful in classifying future, unseen data.

Figure 3:Training set of points with binary labels (+1, -1) in the two-dimensional feature space . Any decision boundary within the dashed grey lines can correctly split the data.

Optimization problem¶

Recall that our goal is to find a linear classifier that maximizes the distances between the decision boundary and the training points (margin linear classifier), but also minimizes the training error. Thus, this constitutes an optimization problem that needs to counterbalance these two factors, which we can state as:

The margins (distances between the decision boundary and the training points) should be maximized.

The training error should be minimized. We will express this in term of hinge loss.

Margin boundaries¶

Previously, we saw that the equation defining a decision boundary satisfies .

We can now define parallel margin boundaries (dashed line in the previous figure) as:

Note that we can define the boundaries like this because we have a degree of freedom in our definition of the decision boundary, namely, the magnitude of the normal vector . That is, regardless of the value , our decision boundary remains unaltered.

Recall the problem of computing the smallest distance of a point to a plane. This distance is:

We can now calculate the signed distance between the decision boundary and the i-th example as:

Thus, the distance between the margin boundaries and the decision boundary is:

Hinge loss¶

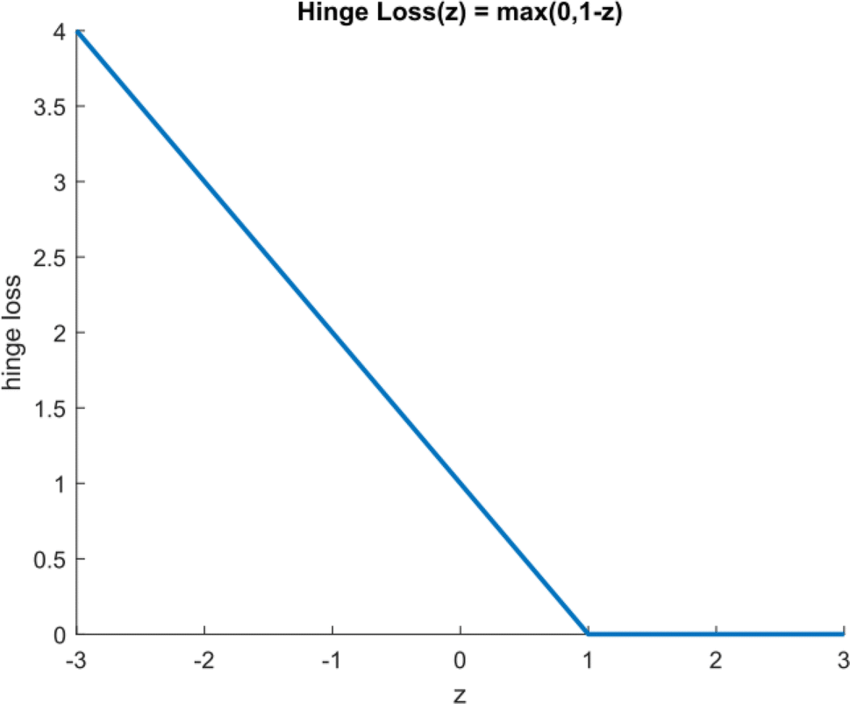

We so far know that will classify the i-th example. The way to know if the classification agrees with the label is by multiplying it by . We can express this agreement also in a slightly modified version, using the hinge loss:

where is the agreement (signed distance from the decision boundary) .

The figure below illustrates how the hinge loss operates along the z-axis (distance from boundary):

Objective function¶

So now we can create an objective function that (1) minimizes the average hinge loss over the training examples, and (2) maximizes . Expression (2) can be also reformulated towards minimizing . Thus we define the objective function as:

where is the regularization parameter that balances the importance of minimizing the regularization term at the cost of incurring more losses (increasing the loss term). Vice versa, the smaller the value of , the more emphasis we will give to minimizing average loss.